By William Doyle and Carlon Brown

The last two decades have seen a major shift in how people approach data. This began to seriously pervade the sports world as early as 2002, with the success of that year's Oakland Athletics.

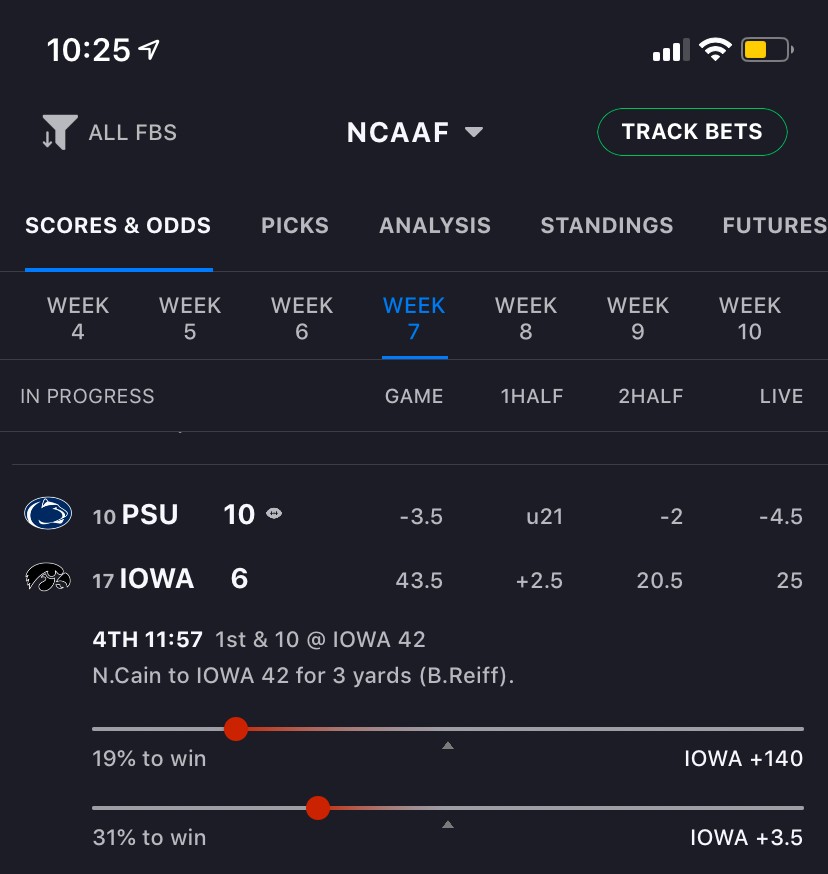

The next data invasion has already begun in our sports betting world, with live win probability in The Action Network app, PRO Systems, and more. It will continue to grow as sports betting becomes legal in more states.

The demand for sports betting related content, products and analysis continues to grow. This includes offerings for new leagues, such as the WNBA. The Action Network has seen an almost three-fold increase in the number of users who track WNBA picks in the app year over year.

The app already has win probability visualizations for point spread, moneyline and total bets for most other sports.

And due to the increased demand for WNBA betting content and analysis over the past year, we decided to make win probability models for it. It's not in the app yet, but we're hoping to get it live soon.